If you play Roblox, use Discord, or live in the UK, you may have noticed some recent changes: chat features are locked, and notices are popping up about “age verification.” Everywhere seems to be cracking down on teen use and “protecting children.”

This isn’t a new fight. YouTubers experienced massive shifts when YouTube cracked down on ads and content amid a COPPA (Children’s Online Privacy Protection Act) settlement back in 2019, and creators on the platform consistently report struggles with the algorithm unfairly targeting certain content. A few years ago, a playthrough of the 2022 horror game “The Mortuary Assistant” by the YouTuber “CoryxKenshin” was age-restricted, while a playthrough of the exact same game by the YouTuber “Markiplier” was left unrestricted. The fight, however, has usually been limited to, for lack of a better term, “public” content: platforms like YouTube, Instagram, and Facebook.

Not so anymore. Since the UK’s Online Safety Act was passed in 2023, there’s been a massive push for companies to comply or risk losing access to a huge chunk of their userbase in the region. But this push isn’t limited to the UK; it’s starting to creep into American media use. Specifically, Discord, an online gaming platform and messaging app, has started its own crackdown in the name of “online safety for children.”

Recently, Discord announced a new “teen-by-default” change to all accounts. This would mean that, unless the user of an account verifies their age by submitting an ID or having their face digitally judged to be “overage,” the account would be restricted to “teen-only features”: they could not unblur content that Discord considers “sensitive” or “inappropriate,” could not access gated channels, and would have their ability to use voice chats restricted, among other limits.

When I was first discovering online communities, Discord’s only requirement was a button push to verify that you were over 13. Now, six years later, Discord risks pushing away both teens and adults by conforming to this “teen-only” default standard and making its built-in NSFW channel function basically unusable.

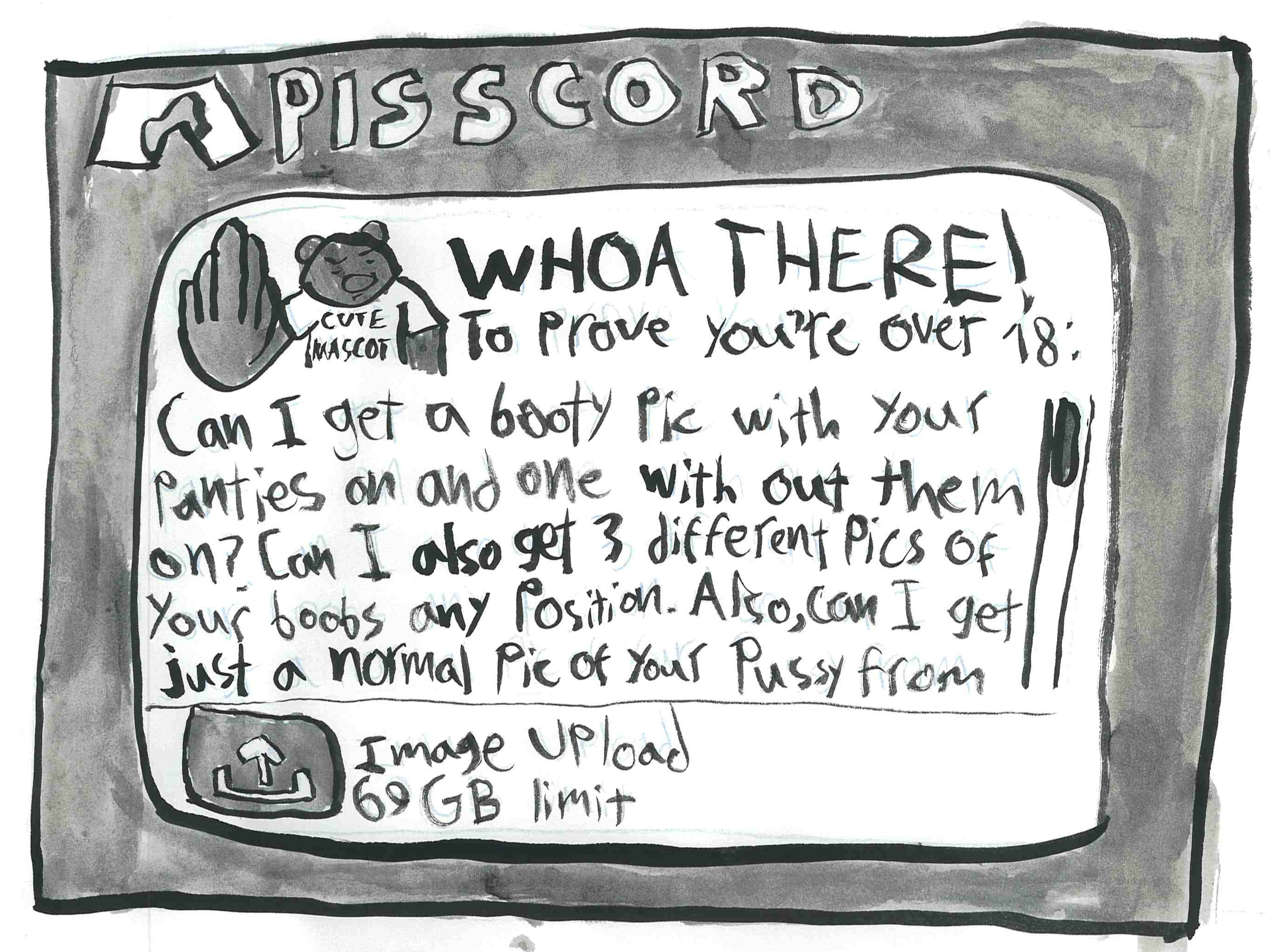

This is a bad idea for several reasons. For one, the multitude of privacy leaks Discord has experienced, as well as the other leaks of personal and sensitive information that have been occurring worldwide with the advent of this trend, make submitting an ID a risky gamble at best. (Discord uses third-party vendors for ID verification.) For another, Discord already has established methods in place for censoring sensitive content. NSFW-only channels, which require users to confirm that they are over 18, are a feature available to any server, and spoiler warnings are quite literally built into the user tools. If these tools become age-gated, they run the risk of not being used at all, which would leave sensitive content with no warning or filter whatsoever. For a third: I don’t care if teens see a boob every once in a while. Infringing on people’s privacy rights to make sure no seventeen-year-old ever knows what sex or a penis is, ever, is puritanical and unreasonable for 2026.

I must acknowledge that there are, as always, legitimate concerns and risks about the safety of children on these platforms. Scroll Instagram Reels or YouTube Shorts, and either one might present you with a video of a dead body. The jokes about groomers on Discord are ubiquitous, and Roblox has had to contend with an influx of creeps masquerading as children in games aimed at a preteen population.

The answer to this, though, is not to limit expression, and certainly not to forbid children from spaces that are supposed to be for them. When you have concerns about predators in real life, the answer has never been “isolate or exclude children from playgrounds or public events.” Slowly but surely, though, that is what is happening to children’s spaces digitally, whether for the convenience of companies, advertisers’ profit margins, or iPad parenting skills.

Discord seems to be bending to pressure contrary to user wishes. Recently, a survey on where users would like to see AI implemented in the platform was released, and then closed after only a day or two of being active. Many users expressed their disappointment and frustration that Discord, which hosts active online communities of gamers and fans, would try to implement AI into its servers.

Now, Discord’s demand for ID checks or facial recognition, a method which raises concerns about privacy, data leaks, and the bias of AI in estimating age, is further alienating a frustrated userbase. Already, short-form videos are popping up about alternatives to Discord (including the open-source software “Valor”). People I’ve known and chatted with for years are looking to swap or ditch the platform entirely, and if Discord goes through with this, I can’t say I won’t join them.

Image credit: Betty Cavicchia’28
